- Neither an Engine, Nor an Expert, Nor a Database: The Illusion of Mastery, driven by sophisticated machine learning.

- What exactly is predictive AI?

- Humans Confronting Unconscious Imitation

- Why can predictive AI create an illusion of understanding?

- What are the main risks of trusting predictive AI without verification?

- Truth and Falsehood: A Task That Remains Human

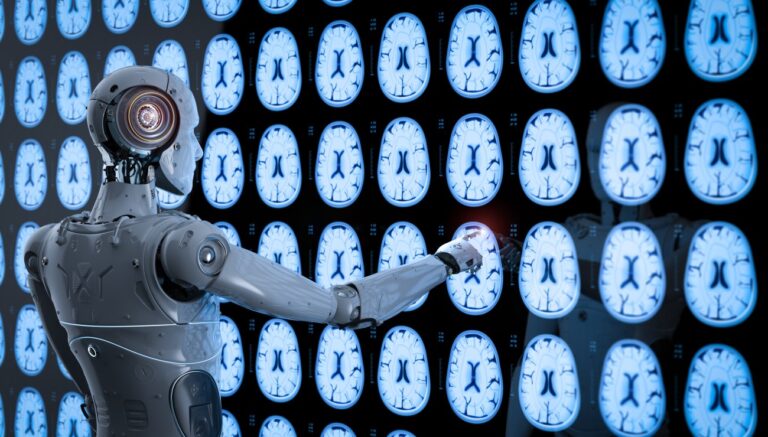

Artificial intelligence is neither an infinite memory nor a thinking mind in the human sense. It is, above all, a formidable statistical prediction engine. Specifically, it anticipates the most probable continuation of a given sequence, drawing on patterns identified in the billions of data points it has already processed. This isn’t deterministic logic, where input A inevitably produces output B, as in classic software. No, here, it’s a complex dance of probabilities. Hence this strange disconnect: a response generated by predictive AI might seem perfect and accurate, yet it sometimes conceals a fragile approximation, or even a pure fabrication. As experts Frank Ng and Ryan Ng rightly point out, AI models infer from past data. They don’t create new truths; they reproduce and recombine existing patterns. And boom, our intuition, accustomed to the rigor of traditional digital tools, takes a hit: we expect the same reliability from these models, which pushes us towards potentially blind trust.
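To make the contrast between deterministic logic and a “dance of probabilities” concrete, here is a minimal Python sketch. Everything in it is hypothetical: the function names, the example prompt, and above all the probabilities, which are invented for illustration and not measured from any real model.

```python
import random

def classic_software(a: int, b: int) -> int:
    # Deterministic: the same input always produces the same output.
    return a + b

# Hypothetical probabilities for completing "The capital of Australia is".
# The numbers are invented for illustration only.
CONTINUATIONS = {"Canberra": 0.6, "Sydney": 0.3, "Melbourne": 0.1}

def predictive_model(rng: random.Random) -> str:
    # Probabilistic: draw one continuation according to its weight.
    words = list(CONTINUATIONS)
    weights = list(CONTINUATIONS.values())
    return rng.choices(words, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [predictive_model(rng) for _ in range(1000)]
answers = set(samples)  # distinct answers given to the very same prompt
```

In this toy setup, the most probable answer comes out most often, but the less probable (and wrong) ones also appear, stated with exactly the same confidence. Nothing in the sampling step checks which one is true.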


Neither an Engine, Nor an Expert, Nor a Database: The Illusion of Mastery, driven by sophisticated machine learning.

Forget the idea that predictive AI is a giant database, a supercharged search engine, or a seasoned expert who has read and verified everything. That’s not how it works. It doesn’t store validated facts or point to reliable sources like Wikipedia. Its machine learning models identify patterns in data and generate responses from them, rather than looking up ready-made answers as in an encyclopedia. Its talent lies in crafting fluent, ultra-credible text by assembling words based on statistical probabilities. This ability to produce phrases that “sound right” creates a powerful illusion of mastery. We get the impression of interacting with an entity that knows. Yet even engineers refer to these systems as “black boxes” because certain internal decisions remain opaque and difficult to explain. It’s frankly frustrating, isn’t it? But that’s the technical reality. Consequently, we project expectations onto it that it cannot meet, amplifying the risk of misunderstandings and errors.

The Silent Risk of Blind Trust

The fluency and confidence of AI-generated responses can lead us to believe them without verifying. Projecting expectations that the system cannot meet is a major trap for anyone using these systems daily, from Sophie the product manager to Leah the student.

What exactly is predictive AI?

Predictive AI, at its core, is a sophisticated statistical model designed to identify and extrapolate patterns from vast datasets. Unlike a traditional database that stores explicit facts, or a rule-based system that follows predefined logic, predictive AI operates by calculating probabilities. It learns how likely certain data points are to appear in sequence or in relation to others, based on the examples it has been trained on. This allows it to forecast future data, complete sequences, or generate new content that statistically resembles its training material. A prime example of predictive AI in action is the large language model (LLM), a type of generative model that powers many modern chatbots and content generators. These models don’t “understand” language in a human sense; instead, they anticipate the most probable next word or token in a sequence, given the preceding context. This process, driven by complex neural networks with billions of parameters, enables them to produce coherent and contextually relevant text, images, or even code, purely through statistical inference. Their “intelligence” stems from their ability to process and generalize from an immense volume of data, rather than from genuine comprehension or reasoning.
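The idea of “anticipating the most probable next word” can be sketched with a toy bigram model, which simply counts which word follows which. This is a deliberate simplification, not how an LLM is actually built: real models use neural networks and far longer contexts. The corpus and the function names `train_bigram` and `next_token_probs` are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; a real model is trained on vastly more text.
corpus = (
    "the cat sat on the mat . the cat ate the fish . "
    "the dog sat on the rug ."
).split()

def train_bigram(tokens):
    # For each word, count how often each other word follows it.
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, prev):
    # Turn raw follow-up counts into probabilities for the next token.
    total = sum(counts[prev].values())
    return {tok: n / total for tok, n in counts[prev].items()}

model = train_bigram(corpus)
probs = next_token_probs(model, "the")
best = max(probs, key=probs.get)  # most probable word after "the"
```

Here `best` is `"cat"`, simply because “cat” follows “the” more often than any other word in the corpus (2 times out of 6). The model has no notion of what a cat is; it only has counts.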

Humans Confronting Unconscious Imitation

AI imitates our language with astonishing precision. It captures style, tone, grammar, sometimes even subtle nuances. But hold on a second: it understands neither the deep meaning nor the real intent behind your words. Every sentence it generates is a statistical sequence, a probable chain drawn from millions of observed examples. It possesses no consciousness, no capacity for introspection. It doesn’t know what it’s saying. It’s like an exceptionally gifted parrot repeating complex sentences without grasping their essence or contextual meaning. Consequently, AI can give you two opposing answers, both credibly formulated. Nothing inside it validates the overall coherence or ethics of its statements, and that makes all the difference. It explores linguistic possibilities but doesn’t judge their relevance to the real world. Alex, the dev who codes for hours, knows this well: you always have to re-read the machine.

The Reimagined Human: Faced with this power of imitation, our role changes. We must become “reimagined humans,” capable of doubting, verifying every piece of information, and distinguishing mere statistical plausibility from factual, verified knowledge.

Why can predictive AI create an illusion of understanding?

Predictive AI excels at pattern recognition and statistical inference. It analyzes vast datasets of human language, identifying the most probable sequences of words that follow a given input. This statistical modeling allows it to construct sentences that are grammatically correct and contextually relevant, mimicking the flow and coherence of human conversation. The sheer volume of data it’s trained on enables it to anticipate what a human might say next, leading to responses that *appear* thoughtful and informed. Because fluency is what we normally take as a sign of understanding in people, we read that same understanding into the machine.

What are the main risks of trusting predictive AI without verification?

Trusting predictive AI without verification carries significant risks, primarily stemming from its lack of true understanding and its reliance on statistical patterns. One major danger is the propagation of misinformation and “hallucinations,” where the AI confidently presents false or nonsensical information as fact because it statistically resembles plausible data from its training. This can lead individuals to make ill-informed decisions, spread inaccuracies, and lose trust in reliable information sources. Furthermore, predictive AI can inadvertently amplify biases present in its training data, a problem known as algorithmic bias. If the data reflects societal prejudices, the AI will learn and reproduce them, producing discriminatory or unfair outputs in critical areas like hiring, loan applications, or even legal judgments. Over-reliance on AI also risks diminishing human critical thinking skills and the ability to discern truth from falsehood, as users become accustomed to receiving seemingly authoritative answers without questioning their veracity. The ultimate consequence is a potential degradation of informed discourse and a weakening of our collective capacity for independent judgment.
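The bias mechanism described above can be illustrated with a deliberately crude sketch. The records below are invented, the groups `"A"` and `"B"` are placeholders, and the “model” is nothing more than a per-group frequency count with a threshold; real systems are far more complex, but the way a skew in the data carries through to the output is similar in spirit.

```python
from collections import defaultdict

# Hypothetical, invented records: (group, was_hired). The skew is deliberate.
history = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 30 + [("B", False)] * 70
)

def fit_rates(records):
    # "Training": learn the historical hiring rate for each group.
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, was_hired in records:
        total[group] += 1
        hired[group] += was_hired  # True counts as 1, False as 0
    return {g: hired[g] / total[g] for g in total}

def predict(rates, group, threshold=0.5):
    # "Inference": recommend hiring when the learned rate reaches the threshold.
    return rates[group] >= threshold

rates = fit_rates(history)
recommend_a = predict(rates, "A")
recommend_b = predict(rates, "B")
```

In this sketch the historical gap (80% versus 30%) becomes an all-or-nothing rule: every candidate from group A is recommended and none from group B. The model has not discovered anything about the candidates; it has only turned a past pattern into a future decision.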

Truth and Falsehood: A Task That Remains Human

The crucial question: can AI distinguish truth from falsehood? The answer is a categorical no. It has no built-in truth detector, no moral compass, no capacity for factual cross-referencing. It gives you the statistically most probable answer, the one that best fits the patterns it has learned, without concern for its factual validity or accuracy. A proven fact and a blatant absurdity can emerge with the same confident tone, the same syntactic fluency. For the machine, there is no “truth” in the human sense, only correlations and statistical links within the training data. Users sense the problem: according to Statista, 59% of internet users worldwide expressed concern in 2023 about the accuracy of AI-generated information. And this is where our role becomes central. It is up to us, as users, to take over the verification process. Marc, the CEO worried about his teams, knows that proper training in AI usage is key. Every piece of information derived from AI must pass through the filter of human verification, common sense, and cross-referencing. This is the new responsibility that falls on all of us, and it fundamentally changes our relationship with information. It pushes us to develop a sharp critical mind, a form of discernment that our previous tools did not demand. Content production is accelerated and access to information is easier, but the burden of validation falls on us more than ever. Are we ready to embrace this role of a reimagined human, capable of dialoguing with the machine without ever blindly entrusting it with our judgment?
